Distributed Systems Engineering for Robust and Scalable Solutions

Abstract

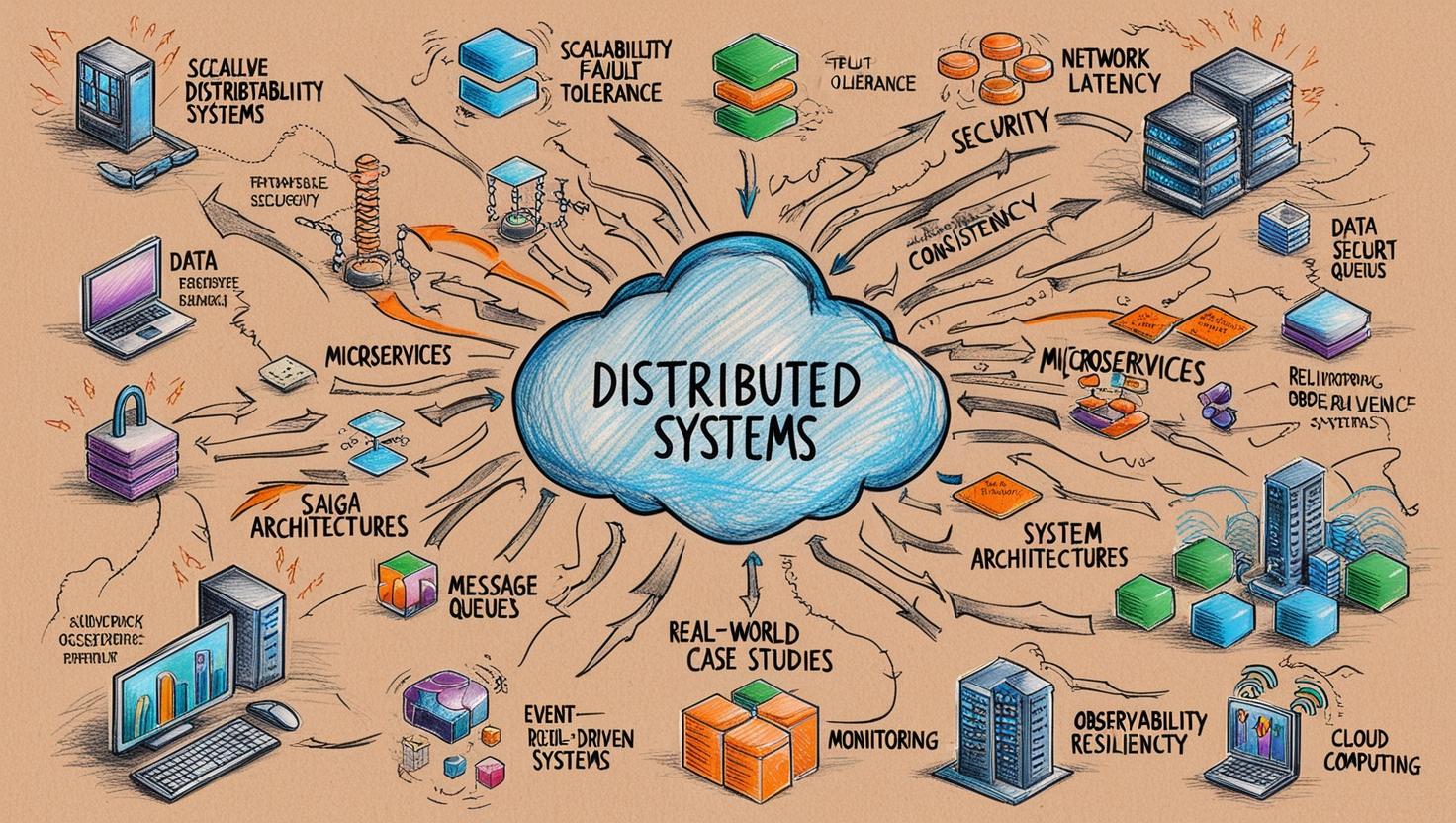

Distributed systems underpin today’s interconnected digital ecosystems, enabling scalable, fault-tolerant, and resilient architectures across diverse industries. This study delves into the engineering of distributed systems, exploring foundational principles such as data consistency, fault tolerance, and network partitioning, alongside advanced patterns like CQRS, Saga, and event-driven architectures. It examines the role of critical tools and technologies—including service meshes, message brokers, and orchestration frameworks—in managing complexity and ensuring system reliability.

Emerging paradigms such as serverless computing and AI-driven optimization showcase the continual evolution of distributed systems, while real-world case studies from industry leaders like Airbnb, Netflix, and Uber highlight practical approaches to scalability, performance, and resilience. By addressing critical challenges such as observability, security, and latency, this study equips engineers with actionable strategies and cutting-edge tools to design distributed systems that meet the demands of dynamic, global-scale applications.

Index Terms: Distributed Systems, Scalability, Fault Tolerance, Data Consistency, Network Latency, Security, Microservices, Message Queues, Saga Patterns, Real-World Case Studies, System Architectures, Event-Driven Systems, Monitoring, Observability, Resilience, Cloud Computing.

Distributed Systems: The Why and the How

Distributed systems are the cornerstone of modern computing, transforming the way applications are designed, deployed, and scaled. At its core, “distributed” refers to a model where computational tasks, data storage, and processes are dispersed across multiple interconnected machines. This decentralization ensures that no single machine serves as a bottleneck or a single point of failure, enabling systems to handle higher loads, maintain availability during failures, and perform computations closer to where data resides.

Why Shift from Single Machine to Distributed?

Historically, computation began with single machines—self-contained systems that executed tasks locally. While suitable for smaller workloads, these systems face significant limitations when scaling to meet modern demands:

- Finite Capacity: Single machines have physical hardware limits.

- Susceptibility to Failures: A single point of failure can disrupt the entire system.

- Inflexible Scaling: Vertical scaling (adding resources to a single machine) is costly and has diminishing returns.

As the demand for processing vast datasets, ensuring global availability, and delivering low-latency experiences grew, these constraints necessitated a shift to distributed architectures. Distributed systems emerged to address these challenges by leveraging multiple machines to collaboratively perform tasks.

Microservices Architecture vs. Monolith

Distributed systems often adopt a microservices architecture, breaking applications into smaller, independent services that communicate over networks. This contrasts with traditional monolithic architectures, where all functionality resides in a single codebase. The shift offers:

- Scalability: Resources can dynamically scale to meet growing demands.

- Fault Tolerance: Failures in one service do not compromise the entire system.

- Performance: Tasks can be executed in parallel, reducing latency.

How Distributed Systems Work

The essence of distributed systems lies in the seamless coordination and communication among components to achieve a unified objective. Despite their decentralized nature, they aim to present a consistent and transparent experience to users. Achieving this requires a foundation of key mechanisms:

- Message Passing: Components exchange messages to synchronize tasks, share data, and coordinate actions. Protocols such as gRPC or REST facilitate these interactions.

- Replication: Data is duplicated across nodes to ensure availability and fault tolerance. If one node fails, another takes over seamlessly.

- Partitioning: Data and workloads are divided among nodes to optimize performance and balance loads.

- Consensus Algorithms: Protocols like Paxos and Raft let nodes agree on a consistent system state despite node failures or network partitions, provided a majority of nodes can still communicate.
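To make partitioning and replication concrete, the sketch below places keys on a consistent-hash ring and picks two owner nodes per key. Consistent hashing is one common technique and is an assumption here, not something the text prescribes; the `HashRing` class, the node names, and the `vnodes` parameter are hypothetical.

```python
import bisect
import hashlib


def _hash(key: str) -> int:
    """Map a string to a stable integer position on the ring."""
    return int(hashlib.sha256(key.encode("utf-8")).hexdigest(), 16)


class HashRing:
    """Consistent-hash ring with virtual nodes (illustrative only)."""

    def __init__(self, nodes, vnodes=64):
        self._vnodes = vnodes
        self._ring = []  # sorted list of (position, node) pairs
        for node in nodes:
            self.add_node(node)

    def add_node(self, node):
        # Each physical node appears at several positions to even out load.
        for i in range(self._vnodes):
            bisect.insort(self._ring, (_hash(f"{node}#{i}"), node))

    def remove_node(self, node):
        self._ring = [(pos, n) for pos, n in self._ring if n != node]

    def owners(self, key, replicas=2):
        """Return up to `replicas` distinct nodes, walking clockwise from the key."""
        if not self._ring:
            return []
        start = bisect.bisect_left(self._ring, (_hash(key), ""))
        found = []
        for offset in range(len(self._ring)):
            _, node = self._ring[(start + offset) % len(self._ring)]
            if node not in found:
                found.append(node)
                if len(found) == replicas:
                    break
        return found


ring = HashRing(["node-a", "node-b", "node-c"])
print(ring.owners("user:42"))  # two distinct nodes: a primary and one replica
```

With this layout, removing a node only affects keys that node owned; keys stored on the remaining nodes keep their placement.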

Distributed System Design Principles

Distributed systems are guided by a set of design principles to manage the trade-offs inherent in decentralization and complexity:

- CAP Theorem: When a network partition occurs, a system must choose between consistency and availability; it cannot guarantee consistency, availability, and partition tolerance all at once. Choices must be tailored to the application’s requirements.

  - Example: DynamoDB prioritizes availability and partition tolerance, while Google Spanner emphasizes consistency and partition tolerance.

- Scalability: Systems scale horizontally by adding machines to handle increased loads, avoiding reliance on a single powerful machine.

  - Example: Web applications distribute incoming traffic across server clusters using load balancers.

- Fault Tolerance: Distributed systems must account for hardware failures, network partitions, and software bugs by employing replication, redundancy, and self-healing mechanisms.

  - Example: Consensus algorithms like Raft maintain state consistency even during failures.

- Transparency: Distributed systems abstract their underlying complexity, offering users a unified experience:

  - Access Transparency: Users can access resources without knowing their location.

  - Replication Transparency: Users remain unaware of data duplication across nodes.

  - Failure Transparency: Failures are handled gracefully without service interruptions.

- ACID vs. BASE Paradigms: Traditional databases rely on ACID properties (Atomicity, Consistency, Isolation, Durability) for strong consistency. Distributed systems often adopt BASE (Basically Available, Soft State, Eventually Consistent) to optimize scalability and availability.

  - Example: Cassandra uses BASE properties to provide eventual consistency for high-availability scenarios.

- Decentralization: Responsibility is distributed across nodes to avoid single points of failure and enhance parallelism.

  - Example: Peer-to-peer networks like BitTorrent distribute tasks and data directly between nodes.

Consistency Models

Consistency models define how distributed systems synchronize data across nodes. These models balance trade-offs between correctness, performance, and availability:

- Strong Consistency: Guarantees that all nodes reflect the most recent write before any subsequent read.

  - Use Case: Banking systems requiring strict accuracy in account balances.

- Eventual Consistency: Allows temporary inconsistencies but ensures that all nodes converge to the same state over time.

  - Use Case: Social media platforms where user timelines tolerate slight delays in updates.

- Causal Consistency: Preserves the causal order of operations, ensuring dependent events are observed in the correct sequence.

  - Use Case: Collaborative editing tools like Google Docs.

Consistency models, explored in detail later, are crucial for maintaining data reliability and fault tolerance in distributed systems.

Architectures, Patterns, and Tools

Distributed systems are not a one-size-fits-all solution. Their design depends on specific goals, workloads, and constraints. Some of the most common architectures include:

- Client-Server: A common model where centralized servers respond to requests from multiple clients. Think of web browsers requesting pages from web servers.

- Event-Driven: Systems react to events asynchronously, enabling high throughput and loose coupling between components. This is often used in real-time applications and stream processing.

- Microservices: Applications are broken down into small, independent services that communicate with each other. This promotes agility, scalability, and independent deployments.

Patterns such as Leader-Follower, Saga, and Event Sourcing provide frameworks to address common challenges, from transaction management to eventual consistency. Tools like Apache Kafka, Redis, and Kubernetes further simplify the implementation of distributed systems, offering solutions for messaging, in-memory data storage, and container orchestration.

In the following sections, we explore in depth the architectures, patterns, tools, and techniques that enable distributed systems to achieve scalability, fault tolerance, data consistency, and resilience in complex and dynamic environments.

Architectures and Patterns in Distributed Systems

Building distributed systems involves more than just scattering components across multiple machines. It requires careful consideration of how these components interact, share data, and maintain consistency. Architectural patterns provide proven solutions to common challenges, enabling us to build robust, scalable, and maintainable distributed applications.

Core Principles of Distributed Domain Design

Data Ownership and Bounded Contexts

In a distributed system, clear ownership of data is paramount. Each service must have exclusive control over a specific domain of data, aligning with the principle of bounded contexts from Domain-Driven Design (DDD). This ensures that services remain autonomous and loosely coupled.

For instance, in an e-commerce platform, the ‘Product Catalog’ service owns all product-related data, while the ‘Order Management’ service owns order data. This separation ensures that each service can evolve independently without affecting others.

However, this separation also introduces challenges in accessing and maintaining consistency across different domains. Patterns like event-driven architectures and various data access strategies help address these challenges.

Event-Driven Architectures

Event-driven architectures (EDAs) play a vital role in modern distributed systems. Services communicate indirectly by publishing and consuming events, so they do not need to know about each other directly. This loose coupling lets them operate independently and asynchronously, and it improves flexibility and resilience.

This approach offers several benefits:

- Increased agility and scalability: Services can be developed, deployed, and scaled independently without affecting others.

- Improved responsiveness: Asynchronous communication allows for real-time reactions and prevents blocking.

- Enhanced fault tolerance: EDAs are more resilient to failures as services are decoupled.

- Better data insights: Event streams can be used for monitoring, analytics, and generating valuable business intelligence.

However, EDAs also introduce complexities that require careful consideration:

- Idempotency: Event handlers must be designed to handle duplicate events without unintended side effects. This is crucial because events might be redelivered due to network issues or retry mechanisms. Techniques like unique identifiers, versioning, and state checks help ensure idempotency.

- Event Enrichment: “Smart events” carry all the necessary context, reducing dependencies and latency. This means including relevant information within the event itself, minimizing the need for consumers to fetch additional data.

- Retention Policies: Determine how long event logs are stored. Balance factors like storage cost, debugging needs, and compliance requirements when defining retention policies.

Addressing these challenges is an ongoing process that requires careful planning, monitoring, and adaptation. However, the rewards of a well-designed EDA are significant, enabling the creation of truly agile and scalable systems.
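The idempotency requirement above can be sketched with a handler that remembers which event identifiers it has already applied. This is a minimal in-memory illustration; the `Event` and `BalanceProjector` names are hypothetical, and a real handler would need to persist the seen-ID set together with the state change so the two cannot drift apart.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    event_id: str  # unique identifier assigned by the producer
    account: str
    amount: int


@dataclass
class BalanceProjector:
    """Applies deposit events exactly once per event_id."""

    balances: dict = field(default_factory=dict)
    _seen: set = field(default_factory=set)

    def handle(self, event: Event) -> bool:
        """Apply the event; return False if it was a duplicate delivery."""
        if event.event_id in self._seen:
            return False  # redelivered event: skip, no side effects
        current = self.balances.get(event.account, 0)
        self.balances[event.account] = current + event.amount
        self._seen.add(event.event_id)
        return True


projector = BalanceProjector()
deposit = Event(event_id="evt-1", account="acct-9", amount=50)
projector.handle(deposit)
projector.handle(deposit)  # simulated redelivery after a retry
print(projector.balances)  # {'acct-9': 50}
```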

Patterns in Distributed Domain Design

Inter-service Communication

Inter-service communication is the lifeblood of distributed systems, facilitating interactions between loosely coupled services. However, each communication style introduces distinct trade-offs:

- Synchronous Communication (Request-Response)

  - Services interact directly through protocols like REST or gRPC, ensuring immediate feedback.

  - Advantages: Simplicity in implementation; suitable for critical operations requiring immediate results.

  - Challenges: Susceptibility to latency, network dependencies, and cascading failures if one service becomes unavailable. For example, a payment service querying a user service might block if the user service is slow or unresponsive.

- Asynchronous Communication (Event-Based)

  - Services communicate indirectly by publishing events to a messaging system like Kafka or RabbitMQ.

  - Advantages: Decoupling services enhances fault tolerance and scalability; services can function independently without waiting for immediate responses.

  - Challenges: Ensuring eventual consistency across services, maintaining reliable message delivery, and debugging complex flows. For instance, ensuring that an “order placed” event is processed reliably across inventory, payment, and notification services requires robust message handling mechanisms.

In essence, synchronous communication is suitable for operations requiring immediate feedback, such as user authentication or payment processing. Asynchronous communication is better suited for tasks that can be performed in the background, like sending email notifications or updating data warehouses.

Choosing the right communication strategy depends on the system’s requirements for responsiveness, fault tolerance, and complexity. Combining these approaches judiciously can help balance trade-offs and optimize system performance.
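One common guard against the cascading failures described for synchronous calls is a circuit breaker, which fails fast once a dependency has failed repeatedly. The sketch below is a simplified illustration under assumed thresholds; the `CircuitBreaker` class and its parameters are hypothetical and are not taken from any particular library.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when the breaker rejects a call without trying it."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # cool-down in seconds
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; failing fast")
            # Cool-down elapsed: let one trial call through (half-open).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A payment service could wrap its calls to the user service in `breaker.call(...)` so that a slow or failing dependency is rejected quickly instead of tying up request threads.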

Distributed Consistency Models

Distributed consistency models define the guarantees a system provides about the state synchronization between its nodes or services. Each model represents a trade-off between availability, performance, and correctness, rooted in the CAP theorem (Consistency, Availability, Partition Tolerance).

Strong Consistency

A system achieves strong consistency when all nodes reflect the most recent write before any subsequent read. This ensures a single, global truth across the system but often comes at the expense of availability and latency.

- Applications:

- Banking Systems: Accurate updates to account balances.

- Distributed Ledgers: Ensuring strict ordering in blockchains.

Eventual Consistency

In eventual consistency, nodes may temporarily diverge in their state, but the system guarantees convergence over time. This model prioritizes availability and performance over immediate consistency.

- Applications:

- Content Delivery Networks (CDNs): Ensuring fast delivery of cached assets globally.

- Social Media Feeds: User timelines tolerate temporary inconsistencies.

Causal Consistency

Causal consistency ensures that operations with a causal relationship (e.g., a read dependent on a prior write) are observed in the correct order. Independent operations may still be observed in different orders across nodes.

- Applications:

- Collaborative Tools: Maintaining logical order in tools like Google Docs.

- Messaging Systems: Ensuring replies follow the corresponding messages.
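Causal relationships are often tracked with vector clocks. The sketch below is one possible illustration, not a description of how any named product works; the helper functions and the participant names are hypothetical.

```python
def tick(clock, node):
    """Return a copy of `clock` with `node`'s counter advanced by one."""
    updated = dict(clock)
    updated[node] = updated.get(node, 0) + 1
    return updated


def merge(a, b):
    """Element-wise maximum: what a node knows after receiving a message."""
    return {n: max(a.get(n, 0), b.get(n, 0)) for n in a.keys() | b.keys()}


def _leq(a, b):
    # Missing entries count as zero.
    return all(count <= b.get(n, 0) for n, count in a.items())


def happened_before(a, b):
    """True if the event stamped `a` causally precedes the one stamped `b`."""
    return _leq(a, b) and not _leq(b, a)


def concurrent(a, b):
    """True if neither event causally precedes the other."""
    return not _leq(a, b) and not _leq(b, a)


message = tick({}, "alice")                  # Alice posts a message
reply = tick(merge({}, message), "bob")      # Bob reads it, then replies
unrelated = tick({}, "carol")                # Carol posts independently

print(happened_before(message, reply))   # True: the reply must follow the message
print(concurrent(reply, unrelated))      # True: nodes may order these either way
```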

Read-Your-Writes Consistency

This model ensures that a user sees the effects of their own writes. While it doesn’t enforce global consistency, it enhances the user experience in systems requiring immediacy.

- Applications:

- E-commerce Platforms: Immediate cart updates after item addition.

- User Profile Management: Ensuring profile changes reflect immediately for the same user.

Monotonic Read Consistency

Monotonic reads guarantee that once a user sees a version of the data, they will not see an older version in subsequent reads.

- Applications:

- Financial Dashboards: Ensuring users don’t see outdated transaction data.

- Monitoring Tools: Maintaining consistent visibility of system metrics.
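Read-your-writes and monotonic reads are both session-level guarantees, and one way to sketch them is to have the client remember the highest data version it has written or observed. The `Replica` and `Session` classes and the single global version counter below are simplifying assumptions made for illustration.

```python
class Replica:
    """A node holding data up to some replicated version."""

    def __init__(self):
        self.version = 0
        self.data = {}

    def apply(self, version, key, value):
        self.data[key] = value
        self.version = version


class Session:
    """Tracks the highest version this client has written or read."""

    def __init__(self, replicas):
        self.replicas = replicas
        self.min_version = 0

    def note_write(self, version):
        # Read-your-writes: later reads must include this write.
        self.min_version = max(self.min_version, version)

    def read(self, key):
        for replica in self.replicas:
            if replica.version >= self.min_version:
                # Monotonic reads: never go back to an older version later.
                self.min_version = replica.version
                return replica.data.get(key)
        raise RuntimeError("no replica is fresh enough for this session")


primary, follower = Replica(), Replica()
primary.apply(1, "cart", ["book"])       # the write has not reached the follower yet

session = Session([follower, primary])
session.note_write(1)
print(session.read("cart"))              # ['book'], served by the primary
```

The stale follower is skipped rather than returning an empty cart, which is the behavior the e-commerce example above relies on.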

Hybrid Approaches

Many distributed systems combine consistency models based on the operational context. For example:

- Google Spanner: Provides strong (externally consistent) reads and writes globally using TrueTime, and also offers stale reads that trade freshness for lower read latency.

- AWS DynamoDB: Offers tunable read consistency, letting clients choose between strongly consistent and eventually consistent reads on a per-request basis.

Choosing the Right Consistency Model

Selecting a consistency model depends on the system’s requirements and tolerance for trade-offs:

- Critical Data Integrity: Opt for strong consistency for systems where correctness outweighs performance.

- Scalability and Performance: Eventual consistency is ideal for high-availability applications with tolerance for temporary inconsistencies.

- User-Centric Operations: Use read-your-writes or monotonic read consistency to improve the user experience in interactive systems.

- Contextual Ordering: Causal consistency suits collaborative environments or systems where operation order matters.

Distributed consistency models offer a spectrum of guarantees, each suited to different operational and business needs.

Data Access Patterns

When services require data from domains they do not own, effective patterns are essential to balance performance, consistency, and scalability. Each pattern has its trade-offs, suited to specific needs and constraints.

Column Schema Replication

Replicating selected columns from one service to another enables the requesting service to avoid frequent interservice calls.

- Benefits: Enhanced read performance and independence from external services during runtime.

- Trade-offs: Risk of data staleness and increased complexity in maintaining synchronized copies, especially when updates are frequent or asynchronous.

- Example: A Wishlist Service replicates product names and IDs from a Catalog Service to minimize read dependencies.

Replicated Caching

Utilizing distributed in-memory caches synchronized across services provides near-instant access to shared data.

- Benefits: Extremely low latency for read operations, decoupling runtime dependencies on source services.

- Trade-offs: Requires robust mechanisms for cache synchronization and can incur high memory usage if data volumes are significant.

- Example: An Order Processing Service caches customer details from a User Service to ensure uninterrupted operations even if the User Service is temporarily unavailable.
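The Order Processing example can be approximated with a local cache that is refreshed on every successful lookup and used as a fallback when the source service is unreachable. This is a simplified single-process stand-in rather than a full replicated cache; `CustomerLookup` and the `fetch_remote` callable are hypothetical.

```python
class CustomerLookup:
    """Fetches customer details, falling back to the last cached copy."""

    def __init__(self, fetch_remote):
        self._fetch_remote = fetch_remote  # callable that queries the User Service
        self._cache = {}

    def get(self, customer_id):
        """Return (details, is_stale)."""
        try:
            details = self._fetch_remote(customer_id)
        except ConnectionError:
            if customer_id in self._cache:
                return self._cache[customer_id], True  # possibly stale copy
            raise  # nothing cached: the caller must handle the outage
        self._cache[customer_id] = details
        return details, False


service_up = {"value": True}


def fetch_from_user_service(customer_id):
    # Stand-in for a network call; raises when the service is "down".
    if not service_up["value"]:
        raise ConnectionError("user service unavailable")
    return {"id": customer_id, "name": "Ada"}


lookup = CustomerLookup(fetch_from_user_service)
print(lookup.get("c1"))        # ({'id': 'c1', 'name': 'Ada'}, False)
service_up["value"] = False
print(lookup.get("c1"))        # ({'id': 'c1', 'name': 'Ada'}, True)
```

Returning the staleness flag lets the caller decide whether an older copy is acceptable for the operation at hand.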

Interservice Querying

Directly querying data from another service is a straightforward approach for accessing up-to-date information.

- Benefits: Guarantees data consistency by retrieving real-time information.

- Trade-offs: Vulnerable to network latency and potential availability issues if the queried service experiences downtime.

- Example: A Payment Service retrieves live credit limits from a User Account Service before processing transactions.

Data Domain Sharing

Consolidating shared data into a unified schema accessible to multiple services facilitates efficient querying.

- Benefits: High performance for joint operations and natural data consistency due to shared ownership.

- Trade-offs: Broader bounded contexts may reduce isolation, complicate schema evolution, and limit independent scalability.

- Example: A shared schema combines ticket and user data for seamless reporting in an event management application.

Selecting the appropriate pattern requires careful consideration of system requirements, such as latency tolerance, scalability needs, and data update frequency.

Saga Patterns for Distributed Transactions

What Is a Saga?

In distributed systems, especially with microservices, managing workflows that span multiple independent services is challenging. Each service often owns its own data and operates autonomously, but real-world business processes—such as processing an order, handling payments, or shipping products—require coordination across these services. This is where Sagas come into play.

A Saga is a distributed workflow pattern designed to coordinate a series of transactions across multiple microservices, ensuring that all steps complete successfully or are rolled back if a failure occurs. Unlike monolithic transactions with ACID properties, Sagas operate under the principle of eventual consistency, leveraging compensating actions to handle failures.

Think of a Saga as a logical coordinator that orchestrates or choreographs the sequence of actions needed to complete a business process while maintaining system integrity.

Why Sagas Are Essential

While modern tools like Kubernetes ensure infrastructure reliability and data replication improves availability, they don’t address the complexities of distributed business workflows. Sagas step in to manage these critical challenges:

- Workflow Coordination: Sagas link discrete microservices into a cohesive process, ensuring each step flows logically into the next.

- Failure Recovery: Sagas define compensating actions to revert partially completed workflows in case of failure, preventing cascading errors.

- Long-Running Transactions: They enable workflows that span extended periods, involving retries, human intervention, or external systems.

- State Management: Sagas track the state of workflows, ensuring transitions between success and failure are consistent and well-defined.

How Sagas Work

Sagas typically implement one of two patterns:

Orchestration

- A central coordinator service (the orchestrator) manages the workflow by explicitly invoking each microservice in sequence and tracking progress.

- Advantages: Centralized control simplifies debugging and state management.

- Trade-Offs: The orchestrator becomes a single point of failure and a potential bottleneck.

- Example: In an e-commerce workflow, the orchestrator manages calls to payment, inventory, and shipping services, ensuring each step completes before moving to the next.
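A minimal orchestrator for the e-commerce example might look like the sketch below: it runs each step in order and, on failure, runs the compensations of the completed steps in reverse. The `run_saga` function and the step names are hypothetical, and a production orchestrator would also persist its progress so it can resume after a crash.

```python
class SagaFailed(Exception):
    """Raised after a failed saga has been compensated."""


def run_saga(steps):
    """Run (name, action, compensation) steps in order.

    On failure, undo the completed steps in reverse order and raise SagaFailed.
    Returns the list of completed step names on success.
    """
    completed = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception as exc:
            for _, undo in reversed(completed):
                undo()
            done = [n for n, _ in completed]
            raise SagaFailed(f"step {name!r} failed; compensated {done}") from exc
        completed.append((name, compensate))
    return [n for n, _ in completed]


log = []


def schedule_shipping():
    raise RuntimeError("carrier unavailable")  # simulated failure


steps = [
    ("payment", lambda: log.append("charge card"), lambda: log.append("refund card")),
    ("inventory", lambda: log.append("reserve items"), lambda: log.append("release items")),
    ("shipping", schedule_shipping, lambda: log.append("cancel shipment")),
]

try:
    run_saga(steps)
except SagaFailed as err:
    print(err)
print(log)  # ['charge card', 'reserve items', 'release items', 'refund card']
```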

Choreography

- Microservices communicate indirectly by publishing and reacting to events on a message bus, with no central control.

- Advantages: Decentralized design improves scalability and fault tolerance.

- Trade-Offs: Harder to visualize and debug workflows due to distributed control.

- Example: When an “order placed” event is published, the payment service processes the payment, the inventory service reserves items, and the shipping service schedules delivery—all independently reacting to the same event.

Trade-Offs and Challenges

- Complexity: Implementing Sagas requires robust design to manage compensations, state transitions, and error handling.

- Performance: Eventual consistency means some delays in data synchronization.

- Debugging: Choreographed Sagas, in particular, are harder to trace due to their distributed nature.

- State Management: Tracking workflow progress can become challenging, especially for long-running Sagas.

Sagas are indispensable in distributed architectures where workflows span multiple services. By coordinating transactions, managing failures, and maintaining consistency, Sagas fill a critical gap left by data replication and infrastructure tools like Kubernetes.

CQRS: Command Query Responsibility Segregation

CQRS is an advanced architectural pattern that decouples write operations (commands) from read operations (queries) to address the distinct needs of distributed systems. This separation facilitates independent scaling, enhanced performance, and adaptability to diverse operational requirements.

Command Model

The Command Model handles state changes by enforcing business rules and generating immutable events that describe these changes.

- In the event-sourced form of CQRS described here, a command does not modify state directly. It produces events that are appended to an append-only store, and read models are updated from those events asynchronously.

- The event log becomes the single source of truth, enabling state reconstruction and ensuring an auditable history of changes.

- Example: Updating a user’s address generates an `AddressUpdated` event instead of altering the database record directly.

Query Model

The Query Model optimizes data retrieval by providing denormalized views tailored to read operations.

- Polyglot Persistence: Adopts storage technologies optimized for query requirements (e.g., Elasticsearch for text search, Redis for caching).

- Evolvability: Enables independent scaling and adaptation to specific read-use cases without impacting write functionality.
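The split between the two models can be sketched as follows: a command handler validates input and appends an `AddressUpdated` event, while a separate read model builds a denormalized view by replaying the log. Apart from the event name, which comes from the example above, the classes here are hypothetical, and the in-memory list stands in for a durable event store.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AddressUpdated:
    user_id: str
    address: str


class EventStore:
    """Append-only log; in-memory here purely for illustration."""

    def __init__(self):
        self._events = []

    def append(self, event):
        self._events.append(event)

    def read_from(self, offset=0):
        return self._events[offset:]


class UserCommands:
    """Command side: enforces rules, then records what happened."""

    def __init__(self, store):
        self._store = store

    def update_address(self, user_id, address):
        if not address.strip():
            raise ValueError("address must not be empty")
        self._store.append(AddressUpdated(user_id, address.strip()))


class AddressView:
    """Query side: a denormalized lookup table built from events."""

    def __init__(self):
        self.by_user = {}
        self._offset = 0  # how far into the log this view has read

    def catch_up(self, store):
        for event in store.read_from(self._offset):
            if isinstance(event, AddressUpdated):
                self.by_user[event.user_id] = event.address
            self._offset += 1


store = EventStore()
commands = UserCommands(store)
view = AddressView()

commands.update_address("u1", "1 Main St")
print(view.by_user)   # {} : the read model has not caught up yet
view.catch_up(store)
print(view.by_user)   # {'u1': '1 Main St'}
```

The empty first result is the synchronization lag that the trade-offs later in this section refer to.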

Benefits of CQRS in Distributed Systems

- Performance and Scalability:

  - Commands and queries scale independently, accommodating workload-specific demands.

  - Denormalized views reduce computational overhead by eliminating costly joins.

- Eventual Consistency:

  - Prioritizes system availability, allowing read models to operate during synchronization delays.

- Auditability and Debugging:

  - Event sourcing creates a comprehensive history of state transitions, aiding traceability and error resolution.

- Alignment with Domain-Driven Design (DDD):

  - Decoupling models aligns with bounded contexts, making CQRS ideal for systems with complex domains.

Challenges and Trade-Offs

- System Complexity:

  - Requires maintaining additional infrastructure such as event stores, message brokers, and multiple databases.

  - Demands robust DevOps and monitoring strategies to ensure reliability.

- Consistency Overheads:

  - Eventual Consistency: Read models might lag behind the latest state, creating transient discrepancies.

  - Idempotency: Event handlers must tolerate duplicates to avoid unintended side effects.

- Data Duplication:

  - Denormalized views duplicate data, increasing storage costs and necessitating careful schema evolution to prevent disruptions.

- Synchronization Latency:

  - Query models may take time to reflect the latest state, potentially leading to user confusion.

  - Example: An order placed by a user might not appear in their order history immediately due to propagation delays.

CQRS empowers distributed systems by tailoring operations to distinct read and write requirements. Its reliance on event-driven synchronization and decoupled models enables scalability, fault tolerance, and performance optimization. However, its adoption requires balancing complexity, eventual consistency, and synchronization trade-offs to achieve robust, user-centered solutions.

Tools and Technologies

Distributed systems rely on a rich ecosystem of tools and technologies to function effectively. These tools provide the essential building blocks for connecting services, managing communication, ensuring scalability, and maintaining consistency. From service meshes to message brokers and orchestration frameworks, understanding these technologies is crucial for building robust and efficient distributed applications.

Service Meshes: Managing Synchronous Communication

A service mesh is a dedicated infrastructure layer that facilitates synchronous communication between microservices. It abstracts the complexities of service-to-service interactions, providing features like load balancing, traffic routing, and security enforcement.

Key Components:

- Data Plane: A network of proxies (“sidecars”) deployed alongside each microservice. These proxies intercept all network traffic, handling tasks like service discovery, routing, load balancing, and security.

- Control Plane: A centralized interface for managing and configuring the data plane. It allows operators to define policies and rules that govern how the proxies behave.

Essentially, the data plane handles the “how” of communication, while the control plane handles the “what” and “why.” This separation provides a powerful and flexible way to manage complex microservice interactions.

Key Tools: Istio, Linkerd and Consul.

Advantages:

- Enhanced security via mTLS (mutual TLS).

- Automated load balancing and service discovery.

- Improved observability with distributed tracing and metrics collection.

Example Use Case: A retail application uses a service mesh to secure payment service calls with encryption, route requests to geographically closest servers, and ensure reliability through automated retries.

Message Brokers: Orchestrating Asynchronous Workflows

Message brokers act as intermediaries for asynchronous communication, decoupling components and ensuring reliable message delivery. They form the backbone of event-driven architectures.

Capabilities:

- Asynchronous Decoupling: Services operate independently, reducing coupling.

- Event Replay: Persistent logs allow replaying events for debugging or recovery.

- Scalability: Efficient handling of massive data streams.

Key Tools:

- Apache Kafka: Ideal for high-throughput, log-based systems.

- RabbitMQ: Excels in task distribution with complex routing needs.

- Apache Pulsar: Cloud-native, with built-in multi-tenancy and geo-replication.

Example Use Case: A ride-sharing platform uses Kafka to manage real-time driver updates, enabling location tracking and dispatch notifications.
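The event replay capability follows from how log-based brokers track consumer progress: records stay in the log, and each consumer group only stores an offset. The toy `TopicLog` class below imitates that idea in memory; it is a hypothetical illustration and does not use or mirror the Kafka client API.

```python
class TopicLog:
    """Toy append-only topic with per-group committed offsets."""

    def __init__(self):
        self._records = []
        self._offsets = {}  # consumer group -> next offset to read

    def publish(self, record):
        self._records.append(record)
        return len(self._records) - 1  # offset of the new record

    def poll(self, group, max_records=10):
        """Return records after the group's committed offset, without committing."""
        start = self._offsets.get(group, 0)
        return self._records[start:start + max_records]

    def commit(self, group, count):
        """Mark `count` more records as processed for this group."""
        self._offsets[group] = self._offsets.get(group, 0) + count

    def seek(self, group, offset):
        """Rewind (or skip ahead) to replay from a chosen offset."""
        self._offsets[group] = offset


topic = TopicLog()
for position in ["driver-7@A", "driver-7@B", "driver-7@C"]:
    topic.publish(position)

batch = topic.poll("dispatch")
topic.commit("dispatch", len(batch))
print(topic.poll("dispatch"))   # []: nothing new for this group
topic.seek("dispatch", 0)
print(topic.poll("dispatch"))   # all three records again (replay)
```

Because offsets are kept per group, a second consumer such as a tracking service can read the same records at its own pace.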

Service Mesh vs. Message Brokers

Modern distributed systems often employ both service meshes and message brokers to address distinct communication paradigms: synchronous and asynchronous. These tools serve complementary roles, enabling robust, scalable, and fault-tolerant architectures.

| Use Case | Service Mesh (Synchronous) | Message Brokers (Asynchronous) |
|---|---|---|
| Real-Time API Calls | Ideal for immediate data retrieval or action, such as gRPC or REST API calls. | Not suitable when the caller needs an immediate response, because delivery is asynchronous. |
| Background Processing | Unsuitable for workflows with high latency or non-blocking needs. | Well suited to decoupled processes like order fulfillment or batch jobs. |
| Fault Tolerance & Retries | Retries failed API calls automatically and uses circuit breaking to stop calling unhealthy services. | Persists messages in logs or durable queues so they can be redelivered after a failure. |
| Decoupling Services | Tight coupling; services must be online and accessible during interaction. | Loose coupling; services operate independently and asynchronously. |
| Security | Secures real-time communication via mTLS, authentication, and policy enforcement. | Secures message streams with encryption and access controls. |
| Scalability | Scales synchronous traffic with intelligent routing and load balancing. | Manages large-scale, distributed event workloads effectively. |

Most modern architectures combine both paradigms, using service meshes for real-time inter-service calls and message brokers for asynchronous workflows.

Orchestration Frameworks: Automating Workload Management

Orchestration frameworks manage the lifecycle of containerized workloads, automating deployment, scaling, and maintenance.

Key Features:

- Service Discovery: Assigns DNS-based names to services (e.g., Kubernetes Services resolved through cluster DNS).

- Autoscaling: Dynamically adjusts resources based on load (e.g., Horizontal Pod Autoscaling).

- Stateful Workloads: Manages persistent storage for databases (e.g., StatefulSets).

Popular Tools:

- Kubernetes: The leading orchestration framework for managing containerized applications.

- Docker Swarm: Lightweight and simpler for smaller-scale deployments.

Example Use Case: A video streaming service uses Kubernetes to scale encoding jobs during peak traffic and manage failover for database replicas.
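The autoscaling behavior can be illustrated with the core calculation that Kubernetes documents for the Horizontal Pod Autoscaler: the desired replica count is the current count scaled by the ratio of the observed metric to its target, rounded up. The function below is a simplified sketch; it omits the tolerance band and stabilization behavior of the real controller, and the parameter names and default bounds are assumptions.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Approximate the HPA scaling rule, clamped to the configured bounds."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Four encoding pods averaging 90% CPU against a 60% target scale out to six.
print(desired_replicas(4, current_metric=90, target_metric=60))   # 6
# Three pods at 50% against a 100% target scale in to two.
print(desired_replicas(3, current_metric=50, target_metric=100))  # 2
```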

Workflow Engines: Orchestrating Complex Business Processes

Workflow engines manage multi-step, long-running business processes that span multiple services, blending automation and manual interventions.

Key Capabilities:

- Stateful Management: Tracks the progress of workflows.

- Error Handling: Provides retries and rollbacks for failed operations.

- Human Tasks: Integrates manual approvals into automated workflows.

Popular Tools:

- Camunda: A BPMN-based engine for business and system task orchestration.

- Temporal: Specializes in fault-tolerant microservice workflows.

Example Use Case: An e-commerce platform uses Camunda to automate order fulfillment, integrating inventory checks, payment processing, and shipment tracking.

Distributed Storage Solutions

Distributed storage is crucial for managing data consistency, availability, and fault tolerance across multiple nodes:

| Category | Description | Examples | Strengths | Limitations | Use Cases |
|---|---|---|---|---|---|
| Relational Databases | Structured data with strong ACID guarantees. | PostgreSQL, MySQL | Strong consistency, transactional guarantees, complex SQL queries. | Harder to scale horizontally for write-heavy workloads; higher latency for distributed operations. | Financial systems, payment processing. |
| Document Stores | Flexible schemas for semi-structured data. | MongoDB, Couchbase | Dynamic schema, hierarchical/nested data support. | Limited transactional capabilities compared to RDBMS. | User profiles, content management. |
| Key-Value Stores | Ultra-fast access for key-based queries. | Redis, DynamoDB | Low latency, ideal for caching or session management. | Poor fit for complex queries; in-memory stores such as Redis are bounded by available RAM. | API caching, session stores. |
| Wide-Column Stores | Optimized for write-heavy and time-series workloads. | Cassandra, HBase | High write throughput, horizontal scalability. | Limited query flexibility; often configured for eventual consistency (e.g., Cassandra). | IoT data, analytics pipelines. |
| Distributed File Systems | Large-scale, fault-tolerant file storage. | HDFS, Ceph | Massive scalability, replication for fault tolerance. | High latency, not suited for real-time operations. | Data lakes, batch processing. |
| Object Storage | Cloud-native storage for unstructured data. | Amazon S3, Google Cloud Storage | Virtually unlimited scalability, cost-effective for large objects. | High latency for random access patterns. | Static assets, backups, disaster recovery. |
| Graph Databases | Optimized for managing relationships in complex networks. | Neo4j, Amazon Neptune | Efficient relationship traversal, specialized query languages. | Limited scalability for non-graph workloads. | Social networks, recommendation systems. |
| Time-Series Databases | Designed for storing and querying time-stamped data. | InfluxDB, TimescaleDB | Fast writes and queries for temporal data, retention policies. | Narrow focus on time-series use cases. | IoT monitoring, financial dashboards. |
| Immutable Logs | Event-driven storage for maintaining a single source of truth. | Apache Kafka, EventStore | Replayable events, ideal for event-driven architectures. | High storage costs; limited query capabilities. | Event sourcing, debugging, state reconstruction. |

Selecting a storage solution requires balancing the trade-offs between scalability, cost, and complexity. A well-architected storage layer empowers distributed systems to meet performance demands while maintaining reliability and resilience.
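
The "replayable events" and "state reconstruction" properties listed for immutable logs can be sketched with a tiny in-memory append-only log. This is an illustration under stated assumptions, not how Kafka or EventStore are implemented. The `Event` fields, the `EventLog` class, and the account example are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Event:
    offset: int   # position in the log, assigned on append
    key: str      # entity the event belongs to, e.g. an account id
    kind: str     # "deposited" or "withdrawn"
    amount: int


class EventLog:
    """Append-only log: events are added at the end and never modified."""

    def __init__(self) -> None:
        self._events: List[Event] = []

    def append(self, key: str, kind: str, amount: int) -> Event:
        event = Event(len(self._events), key, kind, amount)
        self._events.append(event)
        return event

    def read(self, from_offset: int = 0) -> List[Event]:
        return list(self._events[from_offset:])


def replay(events: Iterable[Event]) -> Dict[str, int]:
    """Rebuild current balances purely from the event history."""
    balances: Dict[str, int] = {}
    for event in events:
        if event.kind == "deposited":
            delta = event.amount
        elif event.kind == "withdrawn":
            delta = -event.amount
        else:
            raise ValueError(f"unknown event kind: {event.kind}")
        balances[event.key] = balances.get(event.key, 0) + delta
    return balances
```

Because the log is the source of truth, any derived view can be discarded and rebuilt by calling `replay(log.read())`, which is the basis of the debugging and state-reconstruction use cases in the table.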

Observability and Monitoring in Distributed Systems

Observability is fundamental to understanding and managing the performance and behavior of distributed systems.

Core Pillars:

- Metrics: Quantitative data (e.g., latency, throughput) collected using tools like Prometheus.

- Logs: Context-rich records of system events, managed by tools like Elastic Stack (ELK).

- Traces: End-to-end request tracking across services, instrumented with OpenTelemetry and visualized in tools like Jaeger.

Challenges:

- Data Volume: Managing and analyzing massive telemetry data.

- Distributed Tracing: Propagating trace IDs across every service hop so that spans from different services can be joined into a single trace.

Example Use Case: A fintech application integrates Prometheus for monitoring transaction rates, Jaeger for tracing API calls, and Grafana for dashboard visualization.
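
The trace ID challenge above comes down to each service forwarding the caller's trace identifier while generating a new span identifier for its own work. The sketch below shows this using the W3C Trace Context `traceparent` header layout (`version-traceid-parentid-flags`). The helper names are assumptions, and a production service would normally rely on an OpenTelemetry SDK rather than hand-rolled header handling.

```python
import re
import secrets
from typing import Dict

# traceparent: 2-hex version, 32-hex trace id, 16-hex parent span id, 2-hex flags.
TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_span_id() -> str:
    """Random 16-hex-character identifier for the current unit of work."""
    return secrets.token_hex(8)


def outgoing_headers(incoming: Dict[str, str]) -> Dict[str, str]:
    """Headers for a downstream call: reuse the caller's trace id if one was sent."""
    match = TRACEPARENT.match(incoming.get("traceparent", ""))
    if match:
        trace_id, flags = match.group(1), match.group(3)
    else:
        # No valid context received: this service starts a new, sampled trace.
        trace_id, flags = secrets.token_hex(16), "01"
    return {"traceparent": f"00-{trace_id}-{new_span_id()}-{flags}"}
```

If any service in the call chain drops the header, downstream spans start a fresh trace and the end-to-end view is broken, which is why propagation is usually handled by shared middleware.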

Distributed systems thrive on a symphony of tools tailored to specific challenges. By leveraging service meshes for synchronous interactions, message brokers for event-driven workflows, orchestration frameworks for managing workloads, and observability tools for performance insights, modern systems can achieve resilience, scalability, and efficiency.

Real-World Cases

Exploring real-world implementations of distributed systems provides valuable insights into their design, challenges, and solutions. Here are several notable case studies.

Airbnb System Design

Airbnb’s system design reflects a journey from a monolithic architecture to a service-oriented architecture (SOA), which enabled the company to scale its platform to millions of users and listings. The transition addressed problems with scaling, developer velocity, and integration complexity that emerged as Airbnb grew.

Key architectural components include:

- Service Blocks: Grouped services that handle domain-specific tasks, such as user management, reservations, and listings. This promotes modularity and independent scaling.

- Data Aggregation Layer: Acts as middleware to simplify data fetching and improve developer efficiency. GraphQL plays a central role here, providing a unified schema for querying data from multiple services.

- Centralized and Decentralized Models: Combines distributed data centers with leader-follower database replication to minimize latency and support high availability.

- Caching and Storage: Airbnb avoids over-reliance on in-memory caches for freshness-critical data like listing availability, opting for distributed SQL solutions.

Airbnb’s architecture is guided by principles like modularity, abstraction, decoupling, and fault isolation. This ensures that the system is scalable, reliable, and easy to maintain.

Tools and Technologies:

Airbnb leverages a diverse set of tools to support its distributed architecture:

- GraphQL: Simplifies data fetching and aggregation from multiple services.

- Service Mesh (e.g., Istio, Linkerd): Enhances inter-service communication with features like load balancing, service discovery, and security.

- Elasticsearch: Powers efficient search functionality for listings.

- Kafka: Enables asynchronous communication and event-driven architecture.

- Schema Browser IDE: Improves developer experience with real-time schema validation.

- Monitoring and Observability Tools: Provides comprehensive monitoring and anomaly detection.

This combination of technologies lets Airbnb balance the complexity of a global marketplace with the need for scalability, reliability, developer efficiency, and user-centric functionality.

Netflix System Design

Netflix’s system design is a prime example of a distributed system built for scale and reliability, handling millions of users streaming video content globally.

Key Architectural Aspects:

- Open Connect CDN: A global network of servers that stores and delivers video content to users with low latency. This ensures smooth streaming experiences worldwide.

- Microservices Architecture: Netflix decomposes its functionalities into hundreds of independent microservices. This allows for independent scaling of different components (e.g., user authentication, recommendations, video playback) and fault isolation to prevent cascading failures.

- Hybrid Cloud Infrastructure: Netflix leverages Amazon Web Services (AWS) for its backend infrastructure but also utilizes its custom-built Open Connect CDN for content delivery. This combination provides both flexibility and optimized performance.

Tools and Technologies:

Netflix utilizes a wide array of tools to support its distributed architecture:

- Traffic Management: Elastic Load Balancers (ELBs) and the Zuul API Gateway route traffic efficiently and ensure high availability.

- Data Processing: Kafka handles real-time data streams, while Apache Chukwa collects and processes logs for analysis.

- Data Storage: Netflix uses a hybrid database approach with MySQL for transactional data and Cassandra for high-volume data like viewing history.

- Recommendation System: Apache Spark and machine learning algorithms power Netflix’s personalized recommendations.

- Fault Tolerance: Hystrix, a latency and fault tolerance library (now in maintenance mode), isolates failures and prevents cascading issues.
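
The failure isolation attributed to Hystrix follows the circuit-breaker pattern: after repeated failures, calls to a dependency are short-circuited for a while so that a struggling service is not hammered further. The sketch below shows the pattern in Python and is not Hystrix's API (Hystrix is a Java library). The class name, thresholds, and the injectable `clock` parameter are assumptions for illustration.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_timeout:
            return "half-open"  # allow one trial call through
        return "open"

    def call(self, func: Callable[[], T],
             fallback: Optional[Callable[[], T]] = None) -> T:
        if self.state == "open":
            # Fail fast without touching the unhealthy dependency.
            if fallback is not None:
                return fallback()
            raise CircuitOpenError("circuit is open")
        try:
            result = func()
        except Exception:
            self._failures += 1
            if self.state == "half-open" or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # (re)open the circuit
            if fallback is not None:
                return fallback()
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would wrap each remote call, for example `breaker.call(fetch_recommendations, fallback=lambda: [])`, where `fetch_recommendations` is a hypothetical function that may raise on timeout.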

By combining a robust architecture with carefully chosen technologies, Netflix has created a distributed system that delivers a seamless streaming experience to millions of users worldwide.

Uber System Design

Uber’s architecture has gone through several iterations to meet its growing demands. It started as a monolithic application, transitioned to a Service-Oriented Architecture (SOA), and later evolved into a Domain-Oriented Microservices Architecture (DOMA). Each step broke functionality into smaller, independent services, improving scalability, fault tolerance, and development velocity.

Key Architectural Aspects:

- Domain-Oriented Microservices (DOMA): Uber divides its system into domain-specific services, such as Rider Management, Driver Management, Trip Management, Payments, and Maps. This allows each service to focus on a specific area of functionality, making them easier to develop, deploy, and scale independently.

- Consistent Hashing: Uber uses consistent hashing to spread dispatch work, such as matching ride requests with nearby drivers, across servers. This technique keeps load evenly distributed and minimizes the amount of work that must be reassigned when servers are added or removed.

- Geospatial Data Handling: Uber relies heavily on geospatial data to track driver locations, calculate ETAs, and optimize routes. They use efficient algorithms and data structures to handle this data at scale.
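
The consistent hashing technique mentioned above can be sketched as a hash ring with virtual nodes. This is a generic illustration, not Uber's implementation. The `HashRing` class, the node names, and the choice of SHA-256 are assumptions.

```python
import bisect
import hashlib
from typing import Dict, Iterable, List


def _hash(value: str) -> int:
    """Map a string to a 64-bit position on the ring."""
    return int.from_bytes(hashlib.sha256(value.encode("utf-8")).digest()[:8], "big")


class HashRing:
    def __init__(self, nodes: Iterable[str], vnodes: int = 100) -> None:
        self._vnodes = vnodes
        self._ring: Dict[int, str] = {}   # ring position -> node name
        self._points: List[int] = []      # sorted ring positions
        for node in nodes:
            self.add(node)

    def add(self, node: str) -> None:
        # Each node owns many positions so load spreads evenly.
        for i in range(self._vnodes):
            point = _hash(f"{node}#{i}")
            self._ring[point] = node
            bisect.insort(self._points, point)

    def remove(self, node: str) -> None:
        self._points = [p for p in self._points if self._ring[p] != node]
        self._ring = {p: n for p, n in self._ring.items() if n != node}

    def lookup(self, key: str) -> str:
        """Return the node owning the first ring position clockwise from the key."""
        if not self._points:
            raise LookupError("ring is empty")
        idx = bisect.bisect_right(self._points, _hash(key)) % len(self._points)
        return self._ring[self._points[idx]]
```

When a node is added, only the keys that now fall on its positions change owner; every other key keeps its previous assignment, which is the property that limits disruption during scaling.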

Tools and Technologies:

Uber utilizes a diverse set of technologies to power its platform:

- Real-time Communication: Kafka is used for real-time messaging between different components, enabling features like driver location updates and ride request notifications.

- Data Processing: Hadoop and Hive are used for batch processing of large datasets for analytics and reporting.

- Data Storage: Uber uses a combination of relational databases (like MySQL) for transactional data and NoSQL databases (like Cassandra) for high-volume data like ride history and driver location data.

- Caching: Redis is used extensively for caching frequently accessed data to improve performance and reduce latency.

- Maps and Routing: Uber uses sophisticated mapping technologies and algorithms (like the Google S2 library and Dijkstra’s algorithm) to provide accurate ETAs, optimize routes, and handle dynamic pricing.
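
Since Dijkstra’s algorithm is named above as one routing building block, here is a minimal sketch of it over a small road graph. The graph, the intersection names, and the travel times in minutes are invented for illustration. Production routing engines use heavily optimized variants and live traffic data.

```python
import heapq
from typing import Dict, List, Tuple

# Adjacency list: node -> [(neighbor, travel time in minutes)].
Graph = Dict[str, List[Tuple[str, float]]]


def shortest_travel_times(graph: Graph, source: str) -> Dict[str, float]:
    """Dijkstra's algorithm: minimum travel time from source to each reachable node."""
    best: Dict[str, float] = {source: 0.0}
    queue: List[Tuple[float, str]] = [(0.0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry; a shorter path was already found
        for neighbor, weight in graph.get(node, []):
            candidate = cost + weight
            if candidate < best.get(neighbor, float("inf")):
                best[neighbor] = candidate
                heapq.heappush(queue, (candidate, neighbor))
    return best


# Hypothetical one-way road segments between four intersections.
ROADS: Graph = {
    "A": [("B", 4.0), ("C", 2.0)],
    "B": [("D", 5.0)],
    "C": [("B", 1.0), ("D", 8.0)],
    "D": [],
}
```

Here the fastest route from A to D goes through C and B, so `shortest_travel_times(ROADS, "A")["D"]` is 8.0 minutes rather than the 9.0 minutes of the direct A to B to D route.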

Uber’s system design is a testament to how a complex real-world problem can be solved using a well-designed distributed system. By combining a robust architecture with carefully chosen technologies, Uber has created a platform that efficiently connects riders and drivers worldwide.

Emerging Trends

Serverless and Function-as-a-Service

Serverless computing changes the way applications are built and deployed by abstracting away infrastructure management. Developers can focus on writing business logic, while cloud providers handle the provisioning, scaling, and maintenance of servers. Function-as-a-Service (FaaS), a subset of serverless computing, executes discrete functions in response to specific events, scaling per invocation and charging only for what runs.

Key Features of Serverless Computing:

- Elastic Scaling: Automatically adjusts to workload demands.

- Event-Driven: Functions execute in response to triggers (e.g., HTTP requests, file uploads).

- Pay-as-You-Go: Billing is based on execution time and resource consumption.

- Statelessness: Functions keep no state between invocations, so any persistent state lives in external stores; this keeps functions simple and easy to scale.
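
The pay-as-you-go model above can be worked through as simple arithmetic: compute is commonly metered in GB-seconds (memory allocated multiplied by execution time), plus a per-request charge. The sketch below assumes that billing shape. The function name and the rates in the usage note are placeholders, not any provider's actual pricing.

```python
def monthly_cost(invocations: int, avg_duration_ms: float, memory_mb: int,
                 price_per_gb_second: float,
                 price_per_million_requests: float) -> float:
    """Estimate a monthly FaaS bill from usage and (hypothetical) unit prices."""
    # Resource consumption: seconds of execution times gigabytes of memory.
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * price_per_gb_second
    request_cost = (invocations / 1_000_000) * price_per_million_requests
    return round(compute_cost + request_cost, 2)
```

With placeholder rates of 0.00002 per GB-second and 0.25 per million requests, two million invocations at 200 ms and 512 MB give `monthly_cost(2_000_000, 200, 512, 0.00002, 0.25)`, which evaluates to 4.5. A function that is never invoked costs nothing, which is the "no idle cost" property of this model.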

Advantages of Serverless and FaaS:

- Reduced Overhead: No server management required.

- Cost Efficiency: Only pay for actual usage, eliminating idle costs.

- Rapid Development: Simplified deployment accelerates time-to-market.

- Resilience: High availability and fault tolerance are built into serverless platforms.

Use Cases:

- Real-Time Event Handling: IoT, notifications, and user actions.

- API Backends: Scalable endpoints for applications.

- Data Processing: Pipelines for logs, files, and analytics.

- Microservices: Modular, event-triggered functionalities.

Serverless computing is best suited to intermittent or bursty workloads, rapid prototyping, and services that must scale on demand, making it a core component of modern, cost-efficient architectures.

AI in Distributed Systems

Artificial Intelligence (AI) is revolutionizing distributed systems by enhancing intelligence, efficiency, and resilience.

Core Enhancements:

- Predictive Analytics: Anticipates traffic spikes, optimizes resources, and foresees system failures.

- Intelligent Caching: Adapts caching strategies using machine learning for improved performance.

- Fault Detection and Recovery: AI-driven anomaly detection accelerates failure mitigation.
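
As a point of reference for the anomaly detection item above, the sketch below flags latency outliers with a rolling z-score. This is a simple statistical baseline rather than a machine learning model, and it stands in for the more sophisticated detectors the text refers to. The class name, window size, and threshold are assumptions.

```python
from collections import deque
from statistics import mean, pstdev


class LatencyAnomalyDetector:
    """Flags values that deviate strongly from a rolling window of recent samples."""

    def __init__(self, window: int = 30, threshold: float = 3.0,
                 min_samples: int = 5) -> None:
        self._samples: deque = deque(maxlen=window)
        self._threshold = threshold
        self._min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous relative to the recent baseline."""
        is_anomaly = False
        if len(self._samples) >= self._min_samples:
            mu = mean(self._samples)
            sigma = pstdev(self._samples)
            if sigma > 0 and abs(value - mu) / sigma > self._threshold:
                is_anomaly = True
        if not is_anomaly:
            # Only normal values update the baseline, so spikes do not mask later spikes.
            self._samples.append(value)
        return is_anomaly
```

A monitoring loop would call `observe` for each new latency measurement and trigger an alert or automated mitigation whenever it returns `True`.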

Applications:

- Autonomous Load Balancing: Distributes traffic dynamically across services for optimized performance.

- Enhanced Search and Recommendations: AI pipelines enable personalized and efficient search results.

- Dynamic Pricing and Routing: Optimizes logistics and user experiences in real-time, as seen in ride-sharing platforms like Uber.

By integrating AI, distributed systems become increasingly autonomous and adaptive, enabling them to meet the demands of modern, complex environments with greater precision and efficiency.

Reaching the Summit

This exploration has taken us through the foundational principles, architectures, and tools that underpin the world of distributed systems. From their evolution beyond basic client-server models to the intricate microservices architectures of today, distributed systems have been driven by the relentless need for scalability, resilience, and efficiency in modern applications.

Key takeaways include:

- Core Concepts: A solid grasp of data consistency, fault tolerance, and network latency remains vital to designing reliable systems.

- Architectural Patterns: Strategies like event-driven architectures, Sagas, and CQRS address the unique challenges of distributed environments.

- Critical Tools: Service meshes, message brokers, and orchestration frameworks play a pivotal role in managing complexity and ensuring system reliability.

- Emerging Trends: Innovations such as serverless computing and AI-driven optimization are shaping the next wave of distributed architectures.

Looking forward, distributed systems will continue to evolve, driven by transformative trends such as:

- Edge Computing: The shift toward processing data closer to its source to meet low-latency demands.

- Real-Time Applications: Increasing requirements for seamless, instantaneous user experiences.

- Security and Privacy: Addressing the challenges of safeguarding distributed environments.

- AI and Automation: Leveraging machine learning to optimize and automate system operations.

As the landscape of distributed systems grows more complex, a deep understanding of their principles and technologies will be essential for engineers and architects. By embracing innovation and confronting challenges with a forward-thinking mindset, we can harness the full potential of distributed computing, unlocking new possibilities for connectivity and intelligence in a rapidly evolving world.

Distributed systems are not just a technological marvel; they are the engine powering innovation in diverse fields, from e-commerce and finance to healthcare and scientific research. So, it’s time to embrace the challenge, explore the possibilities, and contribute to the ongoing evolution of distributed computing!
